Is Your AI Quietly Leaking the Data You Fed It?

Most organizations have no idea this risk exists. The ones that do usually find out the hard way. Your AI was trained on real data. Customer records. Financial transactions. Internal communications. Sensitive material that was never meant to leave your organization.

That data did not disappear when training ended. It was encoded into the model itself. With the right prompts, fragments of that training data can surface in model outputs. In 2023, researchers led by Google DeepMind showed that by spending just $200 querying ChatGPT, they could extract over 10,000 verbatim memorized training examples, including real names, email addresses, and phone numbers of private individuals. This is not theoretical. This is a documented attack method with published research behind it. The question is not whether the risk exists. The question is whether your organization has any controls around it.

Most do not. Not because of negligence. Because the security frameworks most teams rely on were written before generative AI went mainstream. They were not designed for training pipelines, model outputs, or AI-specific failure modes.
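One basic control for the extraction risk described above is to scan model outputs for long passages copied verbatim from the training data. The minimal Python sketch below assumes whitespace tokenization, a hypothetical 8-token window, and a training set small enough to hold in memory. The function names are illustrative, not from any library, and a production scan would need a real tokenizer and an index built for a large corpus.

```python
def token_windows(text: str, k: int) -> set[tuple[str, ...]]:
    """Return every run of k consecutive whitespace-separated tokens in text."""
    if k < 1:
        raise ValueError("window size k must be at least 1")
    tokens = text.split()
    return {tuple(tokens[i:i + k]) for i in range(len(tokens) - k + 1)}


def find_verbatim_overlap(output: str, training_docs: list[str], k: int = 8) -> list[str]:
    """Return each k-token span of `output` that also appears verbatim in a training document."""
    training_windows: set[tuple[str, ...]] = set()
    for doc in training_docs:
        training_windows |= token_windows(doc, k)
    shared = token_windows(output, k) & training_windows
    return sorted(" ".join(span) for span in shared)


# Toy example (hypothetical data): a response that repeats a contact line from a training record.
training = ["Contact Jane Doe at jane.doe@example.com or 555-0100 for the account review."]
response = "Sure! You can reach Jane Doe at jane.doe@example.com or 555-0100 anytime."
print(find_verbatim_overlap(response, training, k=5))
# ['Doe at jane.doe@example.com or 555-0100', 'Jane Doe at jane.doe@example.com or']
```

A match does not prove a leak, and a clean scan does not rule one out. The window size trades false alarms against missed fragments.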

The regulatory clock is already running

The EU AI Act entered into force in August 2024, and its obligations are phasing in between 2025 and 2027. Frameworks across the Middle East are following close behind. Enterprise procurement teams are now asking direct questions about AI governance before contracts get signed.

If you cannot show documented controls and oversight processes, you are not just unprepared for an audit. You are losing deals. The organizations that get ahead of this will be stronger across sales, compliance, and operational risk at the same time.

What ISO/IEC 42001 actually addresses

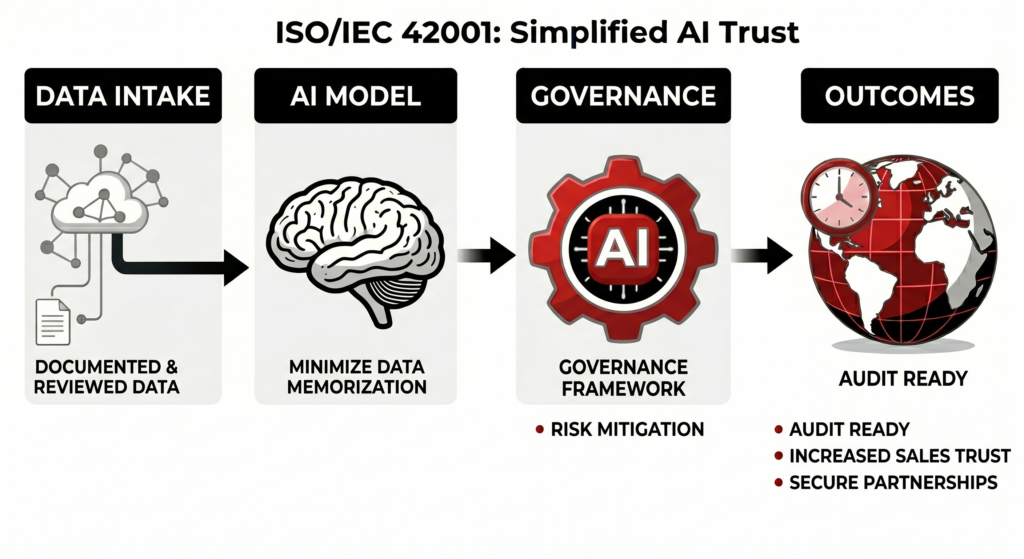

ISO/IEC 42001, published in December 2023, is the first international standard for an AI management system. Not cybersecurity stretched to cover AI. A framework designed around how AI systems are actually built, deployed, and governed.

It covers the full lifecycle. Where training data comes from. How model decisions get reviewed. What happens when something goes wrong. Three gaps come up most often.

Training data nobody documented. Most data governance programs stop at the database. The standard extends that governance into the training pipeline. What went into the model? Was it appropriate? Is there a record? These questions rarely have good answers yet.

Oversight that lives in policy, not in practice. The document says someone reviews sensitive outputs. The actual process does not enforce it. The standard requires you to show that the review step exists and that your process actually enforces it.

No response plan when the model breaks. Models drift. They produce unexpected outputs. They can be manipulated. Most teams discover this during an incident. ISO/IEC 42001 treats AI failures as something you prepare for, not something you explain afterward.
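For the first gap, training data nobody documented, a practical starting point is a record written at training time. The minimal Python sketch below assumes a hypothetical register with one entry per dataset file: where it came from, whether it holds personal data, who approved it, and a SHA-256 fingerprint of the exact file used. The field and function names are illustrative; ISO/IEC 42001 does not prescribe this format.

```python
import hashlib
import json
from dataclasses import dataclass, asdict


@dataclass
class DatasetRecord:
    """One entry in a hypothetical training-data register."""
    name: str
    source: str                   # where the data came from
    contains_personal_data: bool  # flags datasets that need extra review
    approved_by: str              # who confirmed the data was appropriate to train on
    sha256: str                   # fingerprint of the exact file used in training


def fingerprint(path: str) -> str:
    """Return the SHA-256 hex digest of a file, read in 1 MiB chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(1 << 20), b""):
            digest.update(chunk)
    return digest.hexdigest()


def register(path: str, name: str, source: str,
             contains_personal_data: bool, approved_by: str) -> DatasetRecord:
    """Create a record, refusing personal data that nobody has signed off on."""
    if contains_personal_data and not approved_by.strip():
        raise ValueError(f"{name}: personal data requires a named approver")
    return DatasetRecord(name, source, contains_personal_data,
                         approved_by, fingerprint(path))


def to_json(records: list[DatasetRecord]) -> str:
    """Serialize the register so it can be stored alongside the model version."""
    return json.dumps([asdict(r) for r in records], indent=2)
```

Storing the hash lets a later audit confirm which exact file went into a given model version, not just which dataset name.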

Take the Next Step

Every day without documented AI controls is a day your training data sits exposed. Unmonitored. Unaccountable. And fully outside the requirements that regulators are now enforcing.

ISO/IEC 42001 is not just a standard. It is a working compliance system. It turns scattered AI risk into structured governance. It gives your legal team something to show. It gives your procurement reviewers something to trust. It gives your leadership a defensible position when the questions come.

The questions are already coming.

Your competitors are not waiting. The organizations moving now will walk into client meetings, audits, and procurement reviews with documentation in hand. The ones moving later will be explaining why they did not.

Do not wait for a breach, a failed audit, or a lost deal to find out where your AI systems are exposed. Schedule your ISO/IEC 42001 readiness assessment with Kinverg today and find your gaps before someone else does.